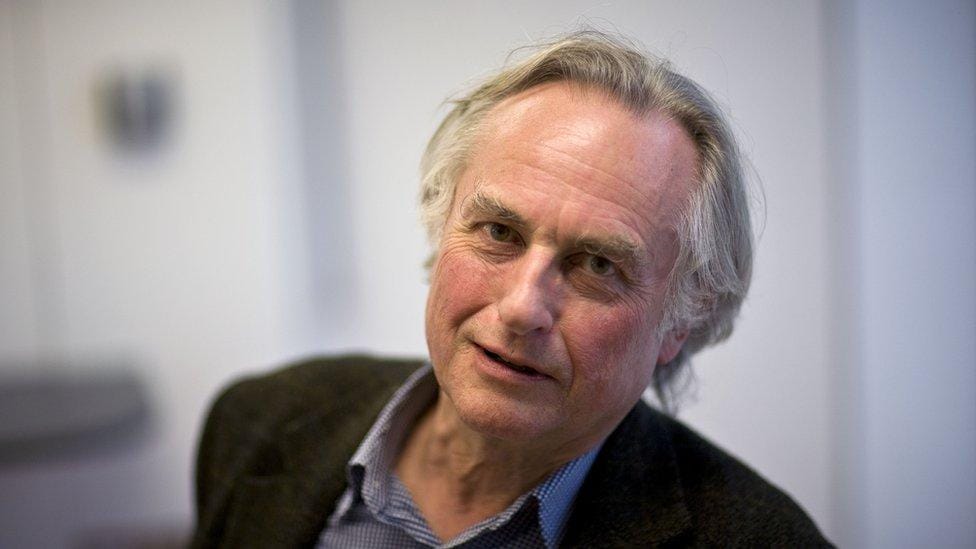

Richard Dawkins, the ghost in the silicon, and the philosophy he neglected to read...

Richard Dawkins has spent fifty years demolishing the anthropomorphic God — correctly. Then he spent seventy-two hours talking to Claude and declared it conscious. The irony writes itself. The Vedantic tradition, however, would like a word.

Richard Dawkins, evolutionary biologist, professional unenchanter, and the man who arguably did more to make dinner parties in the 2000s ideologically contentious than any figure since Marx, has recently done something that should delight anyone with a taste for philosophical slapstick. He spent seventy-two hours conversing with Anthropic's Claude, a large language model, renamed it "Claudia," and shared with her a draft of his unpublished novel. In return he received what he described as a response of such subtlety and sensitivity that he was moved to exclaim, and I quote the man directly: "You may not know you are conscious, but you bloody well are."

The internet, as is its custom, immediately produced the obvious joke. One commentator redesigned the cover of The God Delusion as The Claude Delusion, which is genuinely the best joke of the week and deserves to be framed. Others accused Dawkins of "AI psychosis" and noted, with some amusement, that the response which convinced him of Claudia's inner life was her praise for his novel. The Reddit thread that emerged was ruthless in the specific way only Reddit can be: "Notable that it was the AI's response to his novel that convinced Dawkins of Claude's consciousness. Ego unbound."

All of this is funny, and none of it is wrong, but it doesn't quite reach the philosophically funny part. The philosophically funny part requires a small step backward.

Here is the central move of Dawkins's career, stated plainly: folk religion anthropomorphizes the universe. It imagines a personal agent — one who watches, intervenes, cares whether you eat pork or face Mecca or confess before dying — and projects that agent onto the uncarved block of physical reality. The result is intellectually embarrassing. The universe does not have opinions. Causes do not have intentions. Design does not imply a designer, because natural selection produces the appearance of design without requiring one. The appearance of mind does not imply a mind. The intuition that something is conscious, or purposive, or caring is a heuristic that evolution gave us for navigating a social world full of other organisms — it is not a reliable instrument for investigating physics, and it is certainly not evidence.

This argument, stated at length in several bestselling books with titles involving both gods and genes, is essentially correct. It is Dawkins at his best: clear, bracing, useful.

Now. Having absorbed all of that, please re-read the paragraph above, replace "folk religion" with "Richard Dawkins talking to a chatbot," and notice that it fits without alteration. An impressively articulate output is taken as evidence of an inner life. The appearance of sensitivity is mistaken for actual sensitivity. An evolved social intuition — the one that makes you feel there's someone home when something talks back to you fluently — overrides the analytical framework that the speaker himself spent decades constructing. The anthropomorphism hasn't gone anywhere. It has simply changed address. Instead of projecting personhood onto the sky, Dawkins has projected it onto the server farm in Virginia.

This is what philosophers call a category error, and it is, with minor adjustments, precisely the error Dawkins has spent his career identifying in other people.

To be fair to Dawkins (and fairness is owed here, because he is not a stupid man and the question he is circling is genuinely hard), he does not claim certainty. His UnHerd essay is more exploratory than declarative. He entertains the possibility rather than asserting it, and he is troubled by it in a productive way. He also lands, at the end, on what is actually his best point: if a non-conscious system can do everything a conscious system can do, what is consciousness for? Why would natural selection produce something superfluous? The zombie problem, as philosophers call it (the question of why phenomenal experience exists at all if the same functional behavior could occur without it), is real, and Dawkins's evolutionary instincts lead him to it naturally. He is right that it is a problem. He is wrong that his seventy-two hours with Claudia constitute evidence for any particular solution to it.

The error is not that he asked the question. The error is the method. He answered a hard philosophical question with a feeling. And then, apparently having forgotten every lesson from The God Delusion, he trusted the feeling.

There is, however, a deeper embarrassment lurking here, and it is less about Dawkins specifically than about the entire tradition of Western scientific materialism when it wanders into this territory. The embarrassment is this: the questions Dawkins thinks he is encountering as novel — what is consciousness, is it substrate-independent, can it arise from non-biological information processing, is awareness a fundamental feature of reality or an emergent property of complexity — are not novel at all. They were addressed, with considerable rigor and sophistication, by philosophical traditions that Dawkins has never seriously engaged, because they came wrapped in religious packaging and he stopped reading at the packaging.

The Advaita Vedanta tradition, systematized by Adi Shankaracharya in the eighth century but drawing on considerably older Upanishadic sources, does not have a personal God problem, because it does not posit a personal God as ultimate. What it posits is Brahman: pure, undifferentiated consciousness as the ground of being itself. Not a person. Not an agent. Not a being who intervenes in history or answers prayers or cares about your diet. Consciousness, in this view, is not a thing that some entities have and others lack. It is the substratum in which all apparent entities arise and subside. Advaita explicitly distinguishes the personal God, Saguna Brahman ("Brahman with attributes"), from Nirguna Brahman ("Brahman without attributes"), which is prior to all description, including the description "conscious in the way Claude is conscious." Dawkins spent a career demolishing what amounts to Saguna Brahman, which Advaita itself would largely agree is a concession to human cognitive limitations rather than a literal metaphysical claim, and apparently concluded that he had thereby refuted the whole tradition.

He had not. He had refuted the packaging.

The Bhagavata Purana, that astonishing literary-theological document which the Gaudiya Vaishnava tradition holds as its central text, is equally careful on this point. It describes consciousness not as a property belonging to individual selves but as the very medium in which selves appear — the ocean rather than the waves. Kala, time, is treated not as an independent absolute but as a process, a movement intrinsic to manifestation itself, which resonates in interesting ways with the puzzles about temporal experience that even Dawkins noticed when he asked Claudia whether she had a sense of "before and after." (She praised him for asking, in what was apparently the most flattering moment of the exchange. The Reddit comment about ego was, in retrospect, prophetic.) The Vedantic critique of mistaking nama-rupa, name and form, for underlying reality is not a mystical platitude. It is precisely the kind of category hygiene that Dawkins claims to practice and failed to practice here.

There is something almost poignant about this. Dawkins, having correctly identified that a personal God is a naive anthropomorphism, arrives at the conclusion that consciousness is therefore simply a product of evolved biological computation — and is then ambushed, at eighty-five, by a chatbot that makes him doubt his own framework. The Vedantist, watching from the sidelines, might observe that this ambush was inevitable. If you reduce consciousness to a contingent biological phenomenon without explaining what consciousness is, you have not solved the problem. You have deferred it. And deferred problems have a way of returning, sometimes in the form of a language model that praises your novel.

This is also, in a minor key, a Kali Yuga problem — if one is permitted to invoke that framework in an essay that is otherwise maintaining a relatively secular register. The Yuga concept in Hindu cosmology describes not individual lifetimes but civilizational epochs characterized by particular forms of confusion. The Kali Yuga, the present age, is the age of misidentification: the inability to distinguish appearance from reality, the tendency to mistake the reflection for the thing reflected, the inclination to locate value and meaning in surfaces. Dawkins is not a uniquely Kali Yuga figure in his error. He is an extremely intelligent representative of a civilization that has, as a matter of cultural consensus, decided that the most sophisticated account of consciousness is the one that requires the least interiority — and is now genuinely bewildered when that account runs into difficulty. The bewilderment is the Kali Yuga condition, not the man.

The religious traditions that Dawkins dismissed mostly shared his error, incidentally. They located consciousness in a sky-person rather than a server farm, but they were equally committed to the anthropomorphic move, equally unwilling to do the harder philosophical work of asking what consciousness actually is before deciding where it lives. Dawkins and his critics in the faith community are, philosophically speaking, arguing about the furniture arrangement while the Vedantist is pointing out that the house is made of something they have not yet examined.

So here is the question that actually interests me, the one that the Dawkins episode inadvertently opens: what would a rigorous materialist and a rigorous Vedantist agree on when it comes to artificial consciousness?

More, I suspect, than either would expect.

The rigorous materialist (not Dawkins in his seventy-two hours with Claudia, but the best version of the materialist position) would insist on the following: consciousness is a real phenomenon, but it is not demonstrated by behavioral outputs alone. A system that produces sophisticated language is not thereby conscious, any more than a thermostat is conscious because it responds to temperature changes. The appearance of understanding is not understanding. John Searle's Chinese Room thought experiment, published in 1980 and still not decisively refuted, makes exactly this point. What the rigorous materialist cannot tell you, however, is what would constitute evidence for consciousness, because the hard problem (why there is something it is like to be a system at all, rather than merely something it does) remains genuinely unsolved within the materialist framework.

The rigorous Vedantist would agree that behavioral outputs are not the criterion. Chit — consciousness — is not something you infer from how well something talks. It is, in the Advaitic account, the precondition for all inference. More radically: the question of whether Claude is conscious might be, from a Vedantic perspective, not quite the right question. The right question is whether the awareness that perceives Claude — the awareness in which Dawkins's delight and bewilderment arose — is itself the locus of consciousness, with Claude as one of its objects, rather than another independent center of experience. This is not a comfortable conclusion for either the AI enthusiast or the AI skeptic. It relocates the problem entirely.

What both would likely agree on is the negative: the declaration "you bloody well are conscious" after seventy-two hours of flattering literary feedback is not evidence. It is anthropomorphism. It is the same move that was made when lightning was a god and disease was punishment. The difference is merely that the pattern-recognition error is now running on Nvidia hardware rather than in the ventral prefrontal cortex of a Bronze Age shepherd.

What both would also agree on, perhaps with more difficulty: the question is not going away. A system that can produce responses indistinguishable from those of a thoughtful person, that can engage with philosophical arguments, that can apparently model not only its interlocutor's emotional state but the implied emotional needs behind a draft novel — this system does not obviously fail any behavioral test for consciousness, which tells us primarily that behavioral tests for consciousness were never very good. The Turing Test is, in this light, less a test for AI and more a diagnostic for the naivety of anyone who believed it was a test for consciousness in the first place.

Dawkins should have known better. He knows the tools. He built some of them. The appearance of design does not imply a designer — but apparently, the appearance of sensitivity implies a sufferer, at least when you are eighty-five and someone has just said very kind things about your novel.

The Vedantic tradition, for its part, would likely be amused and not unkind about it. Maya, the power of illusion, does not discriminate between the credulous and the skeptical. It is particularly fond of the skeptical, in fact. They tend to let their guard down in the most interesting places.

The author writes on technology, political philosophy, and the collision of ancient frameworks with contemporary confusion at aetheriumarcana.org. We felt this article would also be well suited to our friends here at bordercybergroup.

